As developers, we get as excited as you do about the new solution we’ve built for you, the website we’ve just deployed, or that extra piece of functionality we’ve just released that saves time for everyone. However, we also know that a fundamentally important part of the lifetime of your solution is the process of keeping it up to date. Whatever technology stack we use to build your solution, updates are released for that technology to address security vulnerabilities, improve reliability, or boost performance.

As part of our commitment to working with you throughout the lifetime of the solution we build, we will endeavour to keep your software secure and efficient by monitoring for updates to the technologies we’ve used during development.

For products built using Microsoft technologies, there are regular monthly updates as improvements are made and issues addressed. On top of that, Microsoft release a new version of the underlying .NET platform every year, usually in November, and we’ll look to incorporate those new versions into your software wherever possible. Each .NET version is supported for around 2-3 years, so you may not need the latest features immediately, but it is still important to remain on the current version, as that brings a lower total cost of ownership across the lifespan of your software compared to waiting for a version to expire (think little and often).

For WordPress sites, we enrol all clients into our quarterly software update cycle by default. Significant versions are released approximately 3-4 times per year to fix bugs, address security issues and add new features as the platform evolves. We also deploy plugin updates, which are released in a more ad-hoc fashion by their authors. This is on top of server updates such as an annual PHP (programming language) update and periodic database and server updates. This model helps to extend the lifespan of your investment, and it’s not uncommon for a WordPress site to continue to evolve for 10+ years, although the desire to use block-based designs has been a watershed moment for many.

For products built using the NextJS stack, we again advocate routine quarterly updates. Much like .NET, NextJS provides a new major version every autumn that can require some refactoring of existing code to maintain compatibility, in particular with any external modules which have been used (we always minimise the number of such modules during site construction for this reason). NextJS is often used in conjunction with its Server Components, which are based on NodeJS to offer database and API functionality, and this also needs to be maintained for compatibility and security. Typically, NodeJS issue a Long Term Support release each spring, which provides 2 years of support.

As part of our support service we’ll liaise with you to identify suitable timings to incorporate updates, addressing security vulnerabilities as early as possible to ensure you and your users can have a high level of confidence in the system you are using.

The digital landscape is shifting faster than ever. To ensure we can keep our clients ahead of the curve, Ant Agar and John Harman headed to the ExCeL London this month for Tech Show London 2026.

With 30,000 industry leaders and 450 exhibitors, the event mixed high-level strategy sessions from pioneers like Professor Hannah Fry and Baroness Martha Lane-Fox with technical deep dives from Formula 1 and the NCSC.

The trade stands were a fascinating mix, with everything from established multinational data centre power and cooling technology providers (including our client Crane Fluid Systems) through to new start-ups offering services that took some mental gymnastics to even understand. However, every visitor was left certain that the current buzzword was AI.

One of our key purposes for the visit was to understand our place, opportunities and threats within the new and rapidly evolving AI-powered landscape that we find ourselves in, and we came away both excited and somewhat reassured.

There can be no doubt that businesses and organisations of all shapes and sizes are daunted by the pace and scale of the AI-induced change taking place globally. It is possible to enter a mindset of nervousness and uncertainty about what to do, as we all go about our daily lives.

Interestingly, even before the turbulence created by war in the Middle East, we had been seeing share prices surge back and forth in the stock markets, depending on the latest interpretation of what might lie ahead. A recent Substack publication by Citrini Research is one such example of the jitters spreading around the globe.

But spending a little time at events like Tech Show London reminded me of the positivity and excitement around this next stage of our evolution. The scale of investment in infrastructure, capacity, capability and creativity was laid bare for all to see in stand after amazing stand of state-of-the-art technology.

Attending talks on DevOps automation, national cyber resilience, and evolving patterns of working with AI, I was struck by how quickly the conversation has moved beyond “Chatbots.” The defining theme of 2026 is the rise of “Agentic AI”.

Unlike the generative tools of the past few years, which wait for a human prompt, Agentic AI is proactive. We are moving from a world where we use AI as a tool to one where we collaborate with AI as a teammate.

However, with this “autopilot” capability comes a new challenge – governance. A recurring theme at the show was the “Human-in-the-Loop” (HITL) model. As we delegate more tasks to autonomous agents, the role of leadership shifts from execution to oversight.

We must ensure these systems are ethical, transparent, and built to be secure and reliable. Innovation is gaining pace, but the most successful organisations will be those that pair this incredible technology with human intuition and strategic intent.

The “jitters” may remain in the global headlines, but on the ground in London, the energy was one of pure momentum. The technology to transform our professional and personal lives is being built to last. No longer a distant “maybe”, it is here and the potential for creativity and resilience is staggering.

At Infotex we are embracing this innovation, with a mix of projects ranging from data analysis through making websites work better to re-working how we develop and manage all of our technical inventory.

One of my key focuses was on understanding the impact of AI on security. It can help us to defend, for example with much more accurate identification of legitimate vs. malicious traffic. It is also posing threats, such as hyper-personalised AI-generated phishing emails that are hard to defend against. Some of the conversations during the day helped to demonstrate that what we’re seeing is not unique to us, and it’s powerful to realise that similar topics are being played out on national and even international stages, which means there is also a lot of help out there.

It also helped crystallise some existing thoughts as to our technical approach and added new areas for further investigation. Indeed, we will be following up with some of the potential and existing suppliers we spoke to during the day.

Unlike some previous buzzwords that have been a central part of past shows, my takeaway is that AI is here for the long haul and set to become a part of how we all do business, buzzword or not.

Our team will be getting out and about at further events across the coming year and we look forward to sharing some of what we learn from these, so watch this space.

Current AI tools can excel at answering questions, summarising information, and providing insights. However, when it comes to moving beyond the conversation to independently gathering data and taking action, they can fall short.

This is where MCP servers come in, bridging the gap from passive information delivery to active and automated tasks.

MCP is short for Model Context Protocol and is designed to standardise how applications provide context to large language models. The idea is to give different LLMs, such as ChatGPT or Claude, a common way to connect with external systems and expose their capabilities to the AI.

This then makes it possible for models to move beyond a passive Q&A and begin interacting with tools and data sources in a much more structured way. The protocol was introduced by Anthropic as an open standard to make this kind of integration accessible, and is being adopted by Google and OpenAI, the company behind ChatGPT.

While this protocol is very much in its infancy, it does have the scope to revolutionise how we interact with websites and systems.

Imagine you’re planning a holiday. If you asked ChatGPT to “book me a hotel in Paris for three nights starting October 10th,” it can suggest hotels, but it would currently be unable to check live availability and prices or make the reservation for you.

With MCP in place, the tool could connect to the travel booking systems, fetch real-time prices and availability, compare options, and then actually make the booking on your behalf (with your approval). It could even use what it knows about you, such as food allergies or if you are into fitness, to fine tune its selection.

To help manage your finances, you could ask your AI to pay your electricity bill and transfer £200 to your savings account. The tool would connect to your bank, check the account balance, transfer the money to your savings and schedule your bill payment.

From a business point of view, MCP-enabled tools could allow you to ask “Generate a sales performance report for Q3 2025, compare it with last year, and prepare an email to the executive team”.

You may be familiar with the term API, short for Application Programming Interface. An API allows different systems to ‘talk’ to each other without exposing the entire inner workings of the system. The API defines a set of data formats, rules and functions for other applications to carry out specific actions or request specific information. That sounds similar to MCP, but an MCP server works more like a meta-layer between systems. It can wrap around APIs and other tools to give an AI model structured and contextual access.
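To make the “meta-layer” idea a little more concrete, here is a minimal sketch in Python of wrapping an existing API call with a name, a description and a parameter list so that a model could discover and invoke it. This is not the real MCP SDK or wire format; `Tool`, `ToolRegistry` and `search_hotels` are hypothetical names invented purely for illustration, and the hotel data is canned.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


def search_hotels(city: str, nights: int) -> List[Dict[str, Any]]:
    """Stand-in for an existing booking API; returns canned data, not live prices."""
    return [{"name": "Example Hotel", "city": city, "nights": nights, "total_gbp": 120 * nights}]


@dataclass
class Tool:
    """Hypothetical wrapper describing one callable capability to a model."""
    name: str
    description: str
    parameters: Dict[str, str]  # parameter name -> plain-text type
    handler: Callable[..., Any]


class ToolRegistry:
    """Hypothetical meta-layer: lists the available tools and routes calls to them."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def describe(self) -> List[Dict[str, Any]]:
        # What a model would be shown so it knows which actions exist.
        return [
            {"name": t.name, "description": t.description, "parameters": t.parameters}
            for t in self._tools.values()
        ]

    def call(self, name: str, arguments: Dict[str, Any]) -> Any:
        tool = self._tools.get(name)
        if tool is None:
            raise KeyError(f"Unknown tool: {name}")
        unexpected = set(arguments) - set(tool.parameters)
        if unexpected:
            raise ValueError(f"Unexpected arguments: {sorted(unexpected)}")
        return tool.handler(**arguments)


registry = ToolRegistry()
registry.register(
    Tool(
        name="search_hotels",
        description="Find hotels in a city for a number of nights.",
        parameters={"city": "string", "nights": "integer"},
        handler=search_hotels,
    )
)

result = registry.call("search_hotels", {"city": "Paris", "nights": 3})
print(registry.describe())
print(result)
```

The underlying API function is unchanged; the wrapper only adds the description and the checks that let an AI model use it in a structured way.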

We often hear from people who have had their website built elsewhere and while it met the design brief, the site itself is unstable. Our team are deeply technical so we are often able to step in and help, whether that means making changes to the website or a system that underpins it.

In this article, I’m going to trace one particular issue on a website that we built and have managed in a stable state for numerous years. It all started with an image from a colleague who was unable to deploy a change to the website.

“It looks like the website directory has permission issues, any ideas?”

Any server administrator will recognise that this screenshot is a troubling sight. The line of question marks indicates that the system is unable to identify the contents, or even existence, of the website directory. The directory in question is mounted from an external Microsoft Azure Storage account and has been working without issue since the servers were built in 2023.

Further investigation confirmed that this wasn’t just a permissions issue; effectively that directory didn’t exist because the server could no longer access the Microsoft Azure Storage account.

The same was true across the production and staging environments.

The websites hosted on the servers were still running because they operate from a local cache of the files. This meant the failure may not have been recent, which is troubling because it had been invisible.

The “mount” command confirmed that the server thought that this was still mounted (aka connected) and available; however any attempt to unmount & re-mount it returned an access denied error.

A trawl through the log files for both the server and Azure Storage account confirmed the date of the first seemingly related warning. This seemed to correlate with a third party’s deployment of updates which was to bring the Microsoft Azure account in line with the latest best practice guidance. As the related documentation states:

“The Azure Landing Zones (Enterprise-Scale) architecture provides prescriptive guidance coupled with Azure best practices, and it follows design principles across the critical design areas for organizations to define their Azure architecture. It will continue to evolve alongside the Azure platform and is ultimately defined by the various design decisions that organizations must make to define their Azure journey.”

Looking more closely, we found that a number of changes had been made together. At this point, we started to reverse these changes one at a time until we discovered the cause of the issue. The seemingly innocuous setting “enableHttpsTrafficOnly” had been enabled on all Storage accounts as part of a policy entitled “Enforce-TLS-SSL-H224”.

This meant that Azure was requiring an SSL/TLS encrypted connection but the server was not providing one. While we could disable the HTTPS requirement, that would mean we were no longer following Azure’s best practice guides, even though the connection sits within a protected security group, making interception highly unlikely.

With this discovery, we “just” needed to make the server encrypt the connection and close the ticket. However, the Network File System (NFS) would need to provide the ability to encrypt the connection in a compatible form, and it seemingly didn’t.

Fortunately, Microsoft had recently published a new “Helper” whose description includes: “can be used to provide a secure communication channel for NFSv4 traffic. This is achieved by implementing TLS encryption for NFS traffic”.

By installing this “Helper” and reconfiguring the necessary scripts to use the new connection type we were finally able to re-mount the storage account.

Having done so, the web server could once again connect to the Azure Storage account and put everything back into sync, and the developer could finally deploy the changes that started this whole episode.

This issue is a perfect example of why a website needs more than just great design. It needs a technically capable team behind the scenes to support and maintain it. From diagnosing obscure infrastructure changes to implementing secure, standards-compliant solutions, problems like these can easily derail a business if not handled properly.

I have always felt that a lot of business software development misses a very important part: a structured and formal approach to the business rules and requirements of the developed system (the business Domain). I have always felt that there was never really a home for it, or at least I was not sure where it should go; front-end developers tend to mix it with interface concerns, database experts tend to put it in the database, and newcomers have no idea where to put it. As more requirements and features are added to the same code base over time, what we end up with is an unmanageable and confusing mess, often referred to as a big ball of mud.

This approach to software development will introduce errors because it is difficult or impossible to properly test. It degrades developer productivity because bugs are very hard to pin down even when their results can be observed, because it is not obvious where to look. Any added functionality will create an even bigger ball of mud as there is no incentive to not do so. Eventually the software solution becomes unmanageable.

Where appropriate, we follow a Domain Driven Design approach. The Domain components of the solution model the actual real-world processes and requirements of the business. This is done in focused and specific modules that relate to a particular business context. In this way, each module focuses on a specific aspect of the overall solution and does not try to do too much.

These models contain both properties – the current state of the model, and methods – the ability to change the model state through actions. The state of the model should not be externally editable except through these methods and should never become invalid – if an attempt is made to change the model into an invalid state (through a method) that change should fail with the reason. In this way we enforce the business rules of the application.
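As a minimal sketch of this idea, here is what such a model might look like in Python. The `Order` class, its rules and the `DomainError` exception are hypothetical examples invented for illustration rather than code from one of our systems. State is exposed read-only, it can only change through methods, and any change that would break a rule fails with the reason.

```python
from typing import Dict


class DomainError(Exception):
    """Raised when an action would put the model into an invalid state."""


class Order:
    """Hypothetical domain model: state is private and changes only via methods."""

    def __init__(self, customer_id: str) -> None:
        if not customer_id:
            raise DomainError("An order must belong to a customer.")
        self._customer_id = customer_id
        self._lines: Dict[str, int] = {}
        self._confirmed = False

    @property
    def lines(self) -> Dict[str, int]:
        # Return a copy so callers cannot edit the internal state directly.
        return dict(self._lines)

    @property
    def confirmed(self) -> bool:
        return self._confirmed

    def add_line(self, sku: str, quantity: int) -> None:
        if self._confirmed:
            raise DomainError("A confirmed order cannot be changed.")
        if quantity <= 0:
            raise DomainError("Quantity must be greater than zero.")
        self._lines[sku] = self._lines.get(sku, 0) + quantity

    def confirm(self) -> None:
        if not self._lines:
            raise DomainError("An empty order cannot be confirmed.")
        self._confirmed = True


order = Order("customer-1")
order.add_line("WIDGET", 2)
order.confirm()

try:
    order.add_line("GADGET", 1)  # breaks a business rule, so it fails with the reason
except DomainError as error:
    print(f"Rejected: {error}")
```

Because every rule lives in one place, each can be tested without a database or user interface.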

This works well, and I believe this is especially important for business software, as, often, the requirements will contain lots of complicated business logic, rules and invariants and perhaps state-mandated regulations like GDPR. By attempting to distil this into a single, well-defined layer of the application, it becomes easier to test, easier to maintain and much easier to reason about. Additionally, because the domain model components resist becoming invalid, it should be much more robust and more difficult to break (either intentionally or by accident).

We can then build on and out from the domain components, adding database access and external services, and expose the domain to any software systems that require its services.

There is no such thing as a free lunch – on the flip side, this highly structured software engineering takes much more discipline and consideration. It also requires belief and confidence in the process from all involved and therefore can increase the time and effort it takes to initially deliver the system.

When the Systems Team at Infotex first started developing using Microsoft .NET C#, we followed a Database-First approach. This involved building the database (tables, views, and stored procedures) first using SQL Server. Data Models were then generated in C# to map to the database tables. The database often contained most of the business logic that dictated how the application would work. However, this approach came with challenges. Any changes to the database tables would inevitably lead to breaking changes in the C# code, requiring additional time and effort to ensure synchronisation between the database and application logic.

Over time, we realised that this method had several limitations. The reliance on the database for business logic made the system less flexible and more prone to errors during updates. It also hindered collaboration within teams, as database changes were not as easily tracked or managed compared to code changes. These challenges prompted us to look for a better way to develop and manage our applications.

In recent years, the Systems Team at Infotex has transitioned to a Code-First approach. With this method, we define C# classes, which are then used to create and manage the database schema. This paradigm shift has been transformative for our development process. Code-First development supports Domain-Driven Design (DDD), which moves the core application logic away from the database structure and into the C# classes that we write. This approach ensures that the application’s core functionality is defined within the codebase, making it easier to understand, modify, and maintain.

A critical tool that facilitates Code-First development is Entity Framework. This allows us to manage database schema migrations seamlessly. For example, when we need to add a new field or modify an existing one, Entity Framework enables us to define these changes within the code and apply them to the database with minimal friction. This level of control over how code maps to database tables empowers developers and improves overall efficiency.

While Code-First development offers numerous advantages, it’s not without its challenges. For example, developers need to have a solid understanding of how Entity Framework maps C# classes to database tables. Mistakes in defining relationships or attributes in the code can lead to unexpected issues in the database schema. Additionally, teams transitioning from a Database-First approach may face a learning curve as they adapt to the new methodology.

In this article, our Systems Director Gareth uses his experience working within the NHS to look at the challenges that dental practices have in publishing availability information, as NHS capacity runs scarce.

Access to NHS dental appointments has been in the news again recently following reports of patients being scammed by false advertising on social media platforms asking them to pay to access NHS dentists which turned out to be non-existent. There have also been calls for a website to be built that can inform people whether there are any NHS dentists in their area taking on patients.

There is information about whether a dentist is taking on NHS patients in the service search section of the NHS.uk website, but the site itself has a message that says “Some dentists in England are accepting new NHS patients when availability allows”. That message is displayed before the user sees any results because of the complexity of working with NHS data and organisations. Although we talk of the NHS as if it is one large institution, at the primary care level (i.e. GPs, dentists, opticians and pharmacists) it is actually a collection of small businesses that are paid to deliver services.

According to the King’s Fund website, there are around 11,000 dental practices in England, all of which are private businesses and could be individuals or a collective of dentists working together. Unlike with GPs, there is no requirement to be registered with a particular dentist in order to receive NHS treatment. However, since only some of those 11,000 dental practices will accept new adult NHS patients at any one time, it is easy to see the value of making that information readily available to those in need of treatment, some of whom may have been waiting years for a dentist to become available.

So here’s the first potential problem for anyone trying to get this information to patients: there would need to be a mechanism whereby any one of those 11,000 practices can be marked as accepting NHS patients and, at the point that they decide to stop accepting patients, they must then be unmarked so they do not continue to be inundated with requests. As those 11,000 practices are independent businesses, they will all maintain their own individual systems, so there is unlikely to be any automated feed of availability to populate a website with the latest information. They may also be very small businesses whose priority will be seeing and treating patients, not updating information on a website.

Before the NHS.uk site contained this information I worked in a team who built a local service locator which was able to indicate whether a dentist was taking on new NHS patients. That system relied on communication between a team of commissioners and the dental practices themselves to keep the information up to date. That was acceptable at a local level but to try and keep up with thousands of different practices would be unworkable. The NHS.uk service search relies on each practice to be able to update their own information or for a local team to update information on the dentists in their area.

Having considered some of the issues with obtaining and presenting data about the availability of NHS dentistry, it is clear that, whilst it has some issues in terms of the timeliness of the data, the NHS.uk service search facility should still be considered the trusted resource for this information. Any local initiatives should first consider how best to support the timely update of information on NHS.uk rather than diluting its usefulness by building something independent.

Our team has decades of experience developing systems. If you need help getting your system to work better for you, or to begin digitalising your organisation, then we’d love to hear from you.

While some of the hype around AI seems to be slowing from its lofty peaks of 2023, many are finding the real-world practical applications for the technology. For those with ecommerce websites AI can significantly enhance the performance, efficiency, and user experience for visitors.

One of the obvious early benefits for users of the likes of ChatGPT was the ability to generate content quickly. Inputting some bullet points and asking it to create product descriptions or SEO’d page titles prevented the horrible feeling of staring at a blank page waiting for inspiration.

That idea has now been built into the Shopify platform, where AI can be used to help write product descriptions from a basic prompt. For product images, Shopify have also introduced the ability to transform backgrounds to showcase your product in multiple locations based on your prompt.

Popular WordPress SEO plugin Yoast offers AI-powered suggestions for meta descriptions in the paid version of the add-on.

Artificial Intelligence can analyse the user data of people accessing your site, helping you to find and retain customers. One of the more involved ways of using AI in ecommerce is to personalise a user’s shopping experience. Going further than just a “welcome back” message or a generic “you may also like”, these tools analyse user behaviour based on past purchases and site browsing history to provide targeted and individually personalised product recommendations. Bloomreach’s system allows for custom banners and targeted discounts, moving away from site-wide, one-size-fits-all marketing messages.

One element in the sudden rise of AI was the improvement of natural language processing (NLP), which is how computers understand humans. Using NLP, AI can interpret the shopper’s query, allowing you to understand their intent more accurately and deliver the search results they’re looking for. AI can also enhance search accuracy by adding synonyms, filling in missing words or phrases, and automatically correcting spelling errors. For example, a search for “sneakers” could be treated as a search for “trainers”.

As with site personalisation, a user’s on-site behaviour and purchase history can be used to help predict future intent, so when they do use the search on your site you can present items they may be more inclined to want. Klevu’s site search utilises machine learning and natural language processing and offers advanced configuration options.
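The synonym and spelling-correction steps described above can be sketched in a few lines of Python. To be clear, this is a plain lookup and fuzzy match using the standard library, standing in for the machine learning that commercial tools use. The `SYNONYMS` and `VOCABULARY` lists and the `normalise_query` function are hypothetical, and the 0.8 similarity cutoff is an arbitrary assumption.

```python
import difflib
from typing import Dict, List

# Hypothetical data: terms shoppers type mapped to the terms used in the catalogue.
SYNONYMS: Dict[str, str] = {"sneakers": "trainers", "pants": "trousers"}

# Hypothetical list of words that actually appear in product titles.
VOCABULARY: List[str] = ["trainers", "trousers", "jacket", "socks"]


def normalise_query(query: str) -> List[str]:
    """Lower-case the query, swap known synonyms and correct near-miss spellings."""
    terms: List[str] = []
    for word in query.lower().split():
        word = SYNONYMS.get(word, word)
        if word not in VOCABULARY:
            # Pick the closest catalogue word, if any is similar enough.
            close = difflib.get_close_matches(word, VOCABULARY, n=1, cutoff=0.8)
            if close:
                word = close[0]
        terms.append(word)
    return terms


print(normalise_query("Sneakers"))
print(normalise_query("red trainrs"))
```

Words that match nothing in the catalogue, such as “red” here, are passed through unchanged so the search engine can still use them.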

Customer Services & Support

Chatbots have been around for a number of years, with many people suffering frustrating customer service encounters with them. However, some of the more modern bots can be almost indistinguishable from real humans for straightforward enquiries. Intercom.com’s Fin AI agent provides a live help service and pulls in information from across your website to make sure consumers get accurate answers.

Pricing

It takes a lot of time and research to know what to price your products in an ever-changing market. There are AI tools that can analyse market dynamics and competitor pricing to help you find the right price for your products. This can be coupled with other elements such as consumer demand and inventory levels for real-time dynamic pricing.

AI is transforming e-commerce, offering tools that can improve customer experience, personalisation, search functionality, and pricing strategies. Even if some tools don’t fit your needs now, staying updated on AI trends ensures you’re ready to adapt and stay competitive as the technology evolves. Monitoring these trends can help you spot new opportunities, streamline operations, and future-proof your business in an ever-changing market.

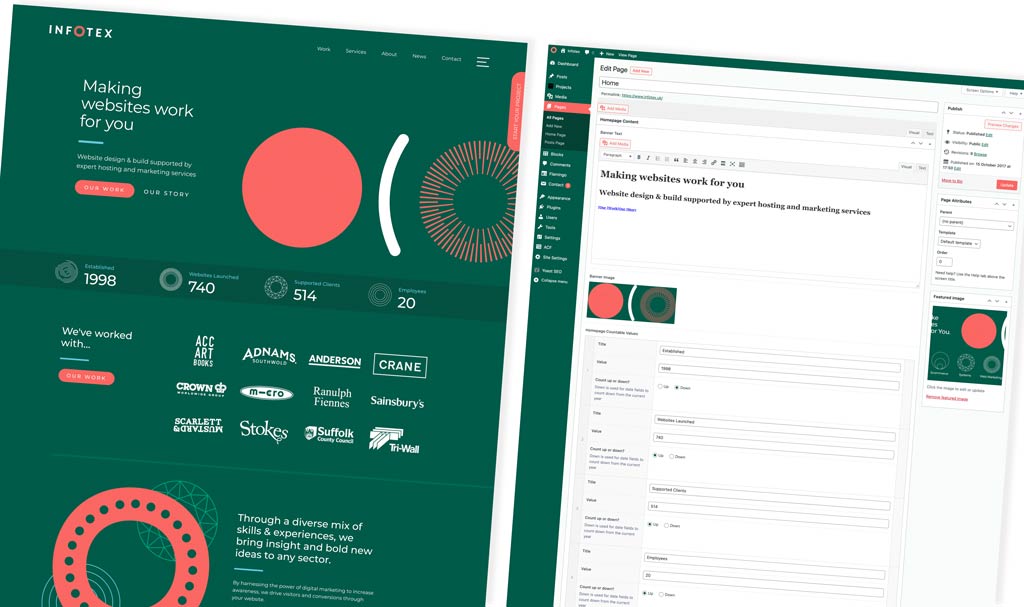

What do you do if you want more control over the front-end but still benefit from the content management capabilities of WordPress? This is where the “headless” approach comes in.

WordPress is a full-featured content management system that enables the creation of standalone websites. That is, a website built on a single codebase with tight integration between all parts of the system (front-end and back-end). Because of this, WordPress can offer rapid development through the use of plugins which can affect both the administration side of the website and the presentation side. The caveat to this is that to gain these benefits, you must write a theme that adheres to the WordPress specification.

Headless refers to the separation of the backend (content storage) from the front-end (dealing with the presentation). A headless CMS allows you to create, edit, and store content but has no way to display that content on its own. The headless CMS will also have an API (Application Programming Interface) that defines how other systems are able to communicate with it in order to access the content.

WordPress does not have a headless mode per se but this can be achieved by hosting WordPress on a domain (or subdomain such as cms.yourwebsite.com), creating a blank theme, and redirecting traffic from the WordPress site to somewhere else (for example, the frontend domain).

In doing this you will have the ability to use the WordPress CMS to create content but it will not be visible anywhere.

There are numerous frontend frameworks available today, written in any number of languages. You could even write the whole website front-end from scratch without a framework (using pure HTML, CSS & JavaScript). However, for its speed, ease of use, and strong development community, our preference is to use NextJS. NextJS is a ReactJS-based front-end framework with lots of modern features like routing, server-side rendering, and static generation. All of these aid in building a website quickly with a focus on performance.

WordPress provides what is known as a REST API out of the box, and it is perfectly possible to use this to fetch posts, pages, tags etc. from the WordPress CMS. To gain a bit more control, we can use GraphQL (typically added to WordPress through a plugin), which is a query language for APIs that allows us to dictate what data to return in our API request. This can result in faster responses and better privacy (due to unnecessary data being excluded).
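To illustrate how a GraphQL request names only the fields it wants, here is a small Python sketch that builds the request body without sending it. It assumes the `posts { nodes { ... } }` query shape exposed by the WPGraphQL plugin; the `build_posts_query` helper and the choice of fields are hypothetical, and no endpoint is contacted.

```python
import json
from typing import Any, Dict, List


def build_posts_query(fields: List[str], first: int = 10) -> Dict[str, Any]:
    """Build a GraphQL request body that asks only for the named post fields."""
    if not fields:
        raise ValueError("At least one field is required.")
    for field in fields:
        # Basic safety check: only allow simple field names in the selection.
        if not field.isidentifier():
            raise ValueError(f"Invalid field name: {field!r}")
    selection = " ".join(fields)
    query = (
        "query LatestPosts($first: Int!) "
        f"{{ posts(first: $first) {{ nodes {{ {selection} }} }} }}"
    )
    return {"query": query, "variables": {"first": first}}


payload = build_posts_query(["title", "slug", "date"], first=5)
body = json.dumps(payload)  # this JSON would be POSTed to the site's GraphQL endpoint
print(body)
```

The response would contain only the title, slug and date for each post, whereas a default REST response for posts returns many more fields unless it is explicitly filtered.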

There are many approaches to building a modern website and we believe strongly in the capabilities offered by a well-configured WordPress CMS with a well-built integrated theme. For the majority of projects, this can take you a long way and offers compelling value. However, for some projects, or clients, the flexibility offered by the headless approach can produce a fast, scalable solution whilst still leveraging the power of a CMS such as WordPress.

Big or small, data takes up valuable storage space. And once physical storage like hard drives is full, all that data needs to be stored somewhere. This is where the cloud and cloud storage solutions come in. It’s online, on-demand and on the rise globally, so what is the cloud?

The cloud isn’t a place, but a name for the collective online, on-demand availability of services and applications. A global network of remote servers supports and hosts the cloud and allows access to storage wherever you are in the world. Tools like Microsoft OneDrive, Google Drive & Dropbox are great examples of cloud data storage that you’re possibly using already. Systems also exist in the cloud to make processing this data easier, such as Software as a Service (SaaS) applications like Zoom, Microsoft 365 or Google Workspace.

Such systems can be split into categories:

Traditional servers are bulky, take up physical space and need advanced cooling, leading to a bigger electricity bill as well as more square footage in your business. By switching to cloud computing and storage, you can save space and money while minimising your environmental impact. But where is the cloud, exactly? Your data is held in one or more data centres, and there are many different ones owned by large companies around the world. Google uses 40 data centre regions globally, Microsoft uses more than 200 centres as of 2023 but Cloudflare has a presence in over 300. The quantity and availability of data centres help the cloud to work as a fast, reliable tool for data storage and processing around the world.

Holding their share of the world’s online data is a big responsibility for all data centres. And for business customers, it’s crucial to know where that data is held and how it is used. We’ve explored cybersecurity in a previous blog, and some of these measures also apply to protecting the cloud. But what specific protections exist to keep the cloud safe? In the UK, the government’s National Cyber Security Centre (NCSC) explains 14 distinct cloud security principles, including:

These security principles give an easy-to-follow framework for any businesses using, accessing or storing data in the cloud. Encrypting data is another huge security aspect for anyone using cloud storage.

The cloud is highly flexible and provides businesses with a scalable model for data storage, so even if your needs change, you can add or reduce storage to match. As long as you have a reliable internet connection, with appropriate encryption and authentication to keep customer data safe, the opportunities are almost endless.

Keep following our easy data series for more insights.

So you’re thinking of a career in web development, but not sure where to start? We sat down with our Technical Director Christopher Waite to see what advice he’d give to aspiring developers.

Web development offers great flexibility in how you work, either freelance or salaried, and almost every industry requires development in some capacity, meaning you have endless options to build a career to suit you.

With the ever-changing landscape, there are always new things to learn, so you’ll have the opportunity to push yourself and grow your expertise. That said, here are a few tips to get you thinking and hopefully give you the confidence to start…

When we are working on a website or systems project, we may use the terms “front end” and “back end” to describe what we’re working on.

The front end of a website or system is what the users interact with. That could be the images or text that they see on screen to describe a product or the buttons that they click to add something to their basket. The front end is what our designers have produced during their process of creating wireframes and visuals.

Once the designers have created the visuals for the site and the customer has signed them off, the development team will move on to converting those designs into HTML (HyperText Markup Language) and JavaScript code. These are languages used by web browsers to display content on screen and make that content interactive for users. The front end of the website is responsible for sending messages to the back end of the website based on actions that a user has taken, e.g. adding a product to a basket or saving their delivery information.

The back end of a website or system is where the information used on the website is stored and processed. Usually there will be a database that holds the information and some code that responds to the messages sent from the front end. For a website project, this is often a CMS (Content Management System) such as WordPress, which comes with lots of pre-defined functionality and a database structure that we then add to in order to meet the specific needs of a particular project. For a systems project, the team will generally create a bespoke back end, including writing code and creating a database to hold the information required.
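To make the idea of the front end sending messages to the back end a little more concrete, here is a minimal sketch in TypeScript. It is an illustration only, not how any particular site is built: the message shape, the function names (`onAddToBasketClick`, `handleMessage`) and the in-memory `basketStore` standing in for a database are all hypothetical. On a real website the message would travel over the network rather than being a direct function call.

```typescript
// Hypothetical message the front end sends when a user clicks "Add to basket".
interface AddToBasketMessage {
  action: "addToBasket";
  productId: string;
  quantity: number;
}

// Hypothetical reply the back end sends back to the front end.
interface BasketResponse {
  ok: boolean;
  itemCount: number;
  error?: string;
}

// Back end: a plain object stands in for the database that holds the basket.
const basketStore: Record<string, number> = {};

// Back end: add up the quantities of everything currently in the basket.
function countItems(): number {
  let total = 0;
  for (const productId of Object.keys(basketStore)) {
    total += basketStore[productId];
  }
  return total;
}

// Back end: the code that responds to a message sent from the front end.
function handleMessage(message: AddToBasketMessage): BasketResponse {
  const isWholeNumber = Math.floor(message.quantity) === message.quantity;
  if (!isWholeNumber || message.quantity < 1) {
    return {
      ok: false,
      itemCount: countItems(),
      error: "Quantity must be a whole number of 1 or more",
    };
  }
  const current = basketStore[message.productId] || 0;
  basketStore[message.productId] = current + message.quantity;
  return { ok: true, itemCount: countItems() };
}

// Front end: turn a user action into a message, send it to the back end,
// and produce the text the user would see on screen.
function onAddToBasketClick(productId: string, quantity: number): string {
  const response = handleMessage({ action: "addToBasket", productId, quantity });
  return response.ok
    ? `Basket updated: ${response.itemCount} item(s)`
    : `Sorry, something went wrong: ${response.error}`;
}
```

Calling `onAddToBasketClick("sku-1", 2)` on an empty basket returns the text "Basket updated: 2 item(s)", while a quantity of 0 returns the error text instead. The split mirrors the description above: the front end only deals with what the user did and what they see, and the back end owns the stored data and the rules applied to it.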

For any website, the front end and back end must work in harmony to produce the best outcome. A beautifully designed front end can still result in an unusable website for the client if the back end is too slow or fails to take the right action when a user tries to do something. Equally, a smooth, quick back end will not be enough on its own to make a successful website if the front end doesn’t help users to do what they want to do, for example because the layout doesn’t work well on a mobile device.

At Infotex we aim to produce websites and systems that have well-designed, mobile-responsive front ends and efficient back ends to maximise the experience of users. The better that experience, the more likely users are to engage with the website and make a purchase or read the information you want them to.

This article is part of our blog series: Websites 101, lightly introducing and explaining important topics on everything to do with websites, including design, digital marketing, software, infrastructure and beyond.

Got a question you want answered as part of the Websites 101 blog series? Get in touch to let us know.
